Optimal Group Size for Rifles

By Damon Cali

Posted on April 01, 2015 at 02:57 PM

Disclaimer: I am not a professional statistician. In fact, I barely qualify as competent in the field. So if you are, and see any conceptual errors in this article, please contact me and I will get those clarified ASAP. That said, I've stood on the shoulders of smarter men for this article, so I'm pretty confident that any problems with it are minor. Now, on with the article...

If you ask a shooter how accurate his rifle is, he'll surely tell you. But how does he know? Usually, it's because he shot a few groups and measured them. But is that all there is to the story?

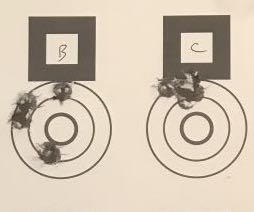

Three-Shot vs. Five-Shot Groups

Check out just about any internet forum on the topic of precision rifles and you will see folks making accuracy claims based on three-shot groups. You will then almost universally see others stating that three-shot groups are not good enough. Five is better and 10 is best. So what's the truth here? How do you know if your rifle really is "1 MOA all day long" or if you just shot an unusually small group? To answer that, we need to figure out what it is we are measuring in the first place.

What we're really after is some measure of how likely our shots are to hit where we are aiming. (For the purposes of this article, ignore wind, shooter error, and things like that - we're talking only about the inherent precision of the rifle/ammunition system). If 99 out of 100 shots land within a 0.5 MOA circle, I think most people would be comfortable calling that a 0.5 MOA rifle. But do you really need to shoot 100 shots per load to determine its accuracy potential? No, you don't. At least not if you're willing to accept a little (more) uncertainty.

Bullet impacts can be considered random events. The more you shoot, the bigger your group will tend to be. So it's not surprising to see some scoffing at 3-shot groups. After all, in F Class, for example, you might shoot three 20-shot relays in a match. So we need to accept that there is no "true answer" to the question of how accurate (or more correctly, how precise) a rifle is. You can only speak in terms of probabilities.

But let's back up a bit and talk about something more concrete. Forget about probabilities and percentages, how do we measure a single group?

Group Size Measurement Methods

The most common method is to measure the center-to-center distance between the two widest shots in the group. This is commonly called the Extreme Spread method. It's very easy to do. But what about all those shots in the middle? Aren't we just ignoring them? Yes and no. True, we aren't measuring them individually, but we do know that they're all better shots than the two we did measure and that's valuable information. But yes, those interior shots contain some information that we're not using.
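For anyone who has shot coordinates on hand (from an electronic target, say), here is a minimal Python sketch of the Extreme Spread calculation. The function name and the sample coordinates are made up for illustration; at the range you would simply use calipers.

```python
import math
from itertools import combinations

def extreme_spread(shots):
    """Largest center-to-center distance between any two shots.

    `shots` is a list of (x, y) impact coordinates in any consistent unit.
    """
    return max(math.dist(a, b) for a, b in combinations(shots, 2))

# Hypothetical 5-shot group, coordinates in inches (illustrative data only).
group = [(0.0, 0.0), (0.3, 0.4), (-0.2, 0.1), (0.1, -0.3), (0.6, 0.8)]
print(round(extreme_spread(group), 3))
```

Note that only one pair of shots ends up determining the result, which is exactly the "ignored data" issue described above.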

Another way to measure might be to measure the radial distance from the center of the group to each shot's center point. We could average those (this is known as the Mean Radius method), or take the standard deviation (this is known as the Radial Standard Deviation method). These methods don't ignore any data, but at a great cost - we now have to measure the x and y coordinates of every single shot (and we have to do calculations afterwards). For a ten shot group, that's 20 measurements, compared to one measurement for the Extreme Spread. Computer vision software or electronic targets can make this easier, but for the average shooter at the range, it can add a lot of time. So it would be nice to know if it's worth it.
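As a rough sketch of what the "measure every shot" calculations look like, the Python below computes both measures from a list of (x, y) coordinates. I am assuming the group center is the average of the shot positions, and for the Radial Standard Deviation I am assuming one common definition: the square root of the sum of the horizontal and vertical sample variances. Other references may define it slightly differently, and the function names are my own.

```python
import math
import statistics

def group_center(shots):
    """Average position of all shots (assumed definition of the group center)."""
    xs, ys = zip(*shots)
    return (statistics.fmean(xs), statistics.fmean(ys))

def mean_radius(shots):
    """Average distance from the group center to each shot."""
    center = group_center(shots)
    return statistics.fmean([math.dist(s, center) for s in shots])

def radial_std_dev(shots):
    """sqrt(var_x + var_y) using sample variances (assumed definition)."""
    xs, ys = zip(*shots)
    return math.sqrt(statistics.variance(xs) + statistics.variance(ys))

# Hypothetical 5-shot group, coordinates in inches (illustrative data only).
group = [(0.0, 0.0), (0.3, 0.4), (-0.2, 0.1), (0.1, -0.3), (0.6, 0.8)]
print(round(mean_radius(group), 3), round(radial_std_dev(group), 3))
```

Every shot contributes to both numbers, which is where the extra statistical efficiency comes from.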

There are actually quite a few of these methods that have been worked out by scientists, engineers, and statisticians over the years. I will skip many of them because they're not very useful. But there is one more I'd like to mention because it turns out to be interesting. It's called the Figure of Merit, and is simply the average of the horizontal extreme spread and the vertical extreme spread. Measuring the Figure of Merit for a group therefore requires two measurements and a very simple calculation. As you might suspect, this keeps just a little more information than the Extreme Spread method, but less than the "measure every shot" methods. The effort required is a little more than the Extreme Spread method requires, and quite a bit less than the "measure every shot" methods.
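The Figure of Merit is simple enough to write out in a few lines of Python. This is only a sketch with a made-up function name and sample data; on paper it amounts to two caliper measurements and an average.

```python
def figure_of_merit(shots):
    """Average of the horizontal and vertical extreme spreads.

    `shots` is a list of (x, y) impact coordinates in any consistent unit.
    """
    xs, ys = zip(*shots)
    horizontal = max(xs) - min(xs)  # widest left-to-right separation
    vertical = max(ys) - min(ys)    # widest top-to-bottom separation
    return (horizontal + vertical) / 2

# Hypothetical 5-shot group, coordinates in inches (illustrative data only).
group = [(0.0, 0.0), (0.3, 0.4), (-0.2, 0.1), (0.1, -0.3), (0.6, 0.8)]
print(round(figure_of_merit(group), 3))
```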

If you are interested in this sort of thing, I recommend purchasing a booklet first published in 1964 by Frank E. Grubbs: Statistical Measures of Accuracy For Riflemen and Missile Engineers. It's an old book that describes the various methods and compares them in terms of statistical efficiency. It is definitely a math book, but one that should be accessible to anyone with a basic background in statistics.

Optimal Group Size

I discovered Grubbs' book after seeing an article about optimal group sizes by Geoffrey Kolbe. I had never really thought about it before, and always just assumed more shots were always better. It turns out that that is not always true.

In fact, in a footnote at the beginning of Grubbs' book, he notes, with little explanation:

"For the range, for example, the best group size is about eight."

By range, he meant, I presume, extreme spread. In any case, my curiosity was engaged and I decided to reproduce the analysis given in Kolbe's article, but with a little more detail.

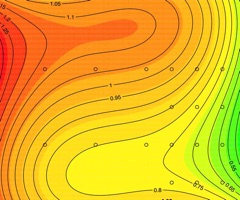

The first question to answer with the methodology described in Kolbe's article is which of the above methods is best, and by how much.

First, I defined the confidence and tolerated error I was willing to accept. I chose a confidence level of 90%, which means that 90% of the tests I shoot will fall within the error I deem tolerable. By "test" I mean the average of a series of groups. I chose a tolerated error of 20%. For example, if I have a rifle whose true average group size is 1 MOA, 9 out of 10 tests will yield averages between 0.8 and 1.2 MOA.
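To make the idea of "confidence" and "tolerated error" concrete, here is a small Monte Carlo sketch in Python. It is not the method from Kolbe's article. It assumes shots scatter as a circular normal distribution around the point of aim, uses the long-run simulated average as a stand-in for the "true" group size, and all function names are hypothetical.

```python
import math
import random
from itertools import combinations

def extreme_spread(shots):
    """Largest center-to-center distance between any two shots."""
    return max(math.dist(a, b) for a, b in combinations(shots, 2))

def shoot_group(n_shots, rng, sigma=1.0):
    """Simulate one group, assuming circular normal dispersion."""
    return [(rng.gauss(0, sigma), rng.gauss(0, sigma)) for _ in range(n_shots)]

def average_of_groups(n_groups, n_shots, rng):
    """One 'test': the average extreme spread of a series of groups."""
    total = sum(extreme_spread(shoot_group(n_shots, rng)) for _ in range(n_groups))
    return total / n_groups

def fraction_within(n_groups, n_shots, tolerance=0.20, trials=2000, seed=1):
    """Fraction of simulated tests landing within `tolerance` of the mean."""
    rng = random.Random(seed)
    averages = [average_of_groups(n_groups, n_shots, rng) for _ in range(trials)]
    true_mean = sum(averages) / trials  # stand-in for the true average group size
    hits = sum(abs(a - true_mean) <= tolerance * true_mean for a in averages)
    return hits / trials

# Compare a single 3-shot group against four 5-shot groups.
print(fraction_within(1, 3), fraction_within(4, 5))
```

A test meets the 90%/20% target when the returned fraction is at least 0.9. Under these assumptions, a single three-shot group should fall well short of that.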

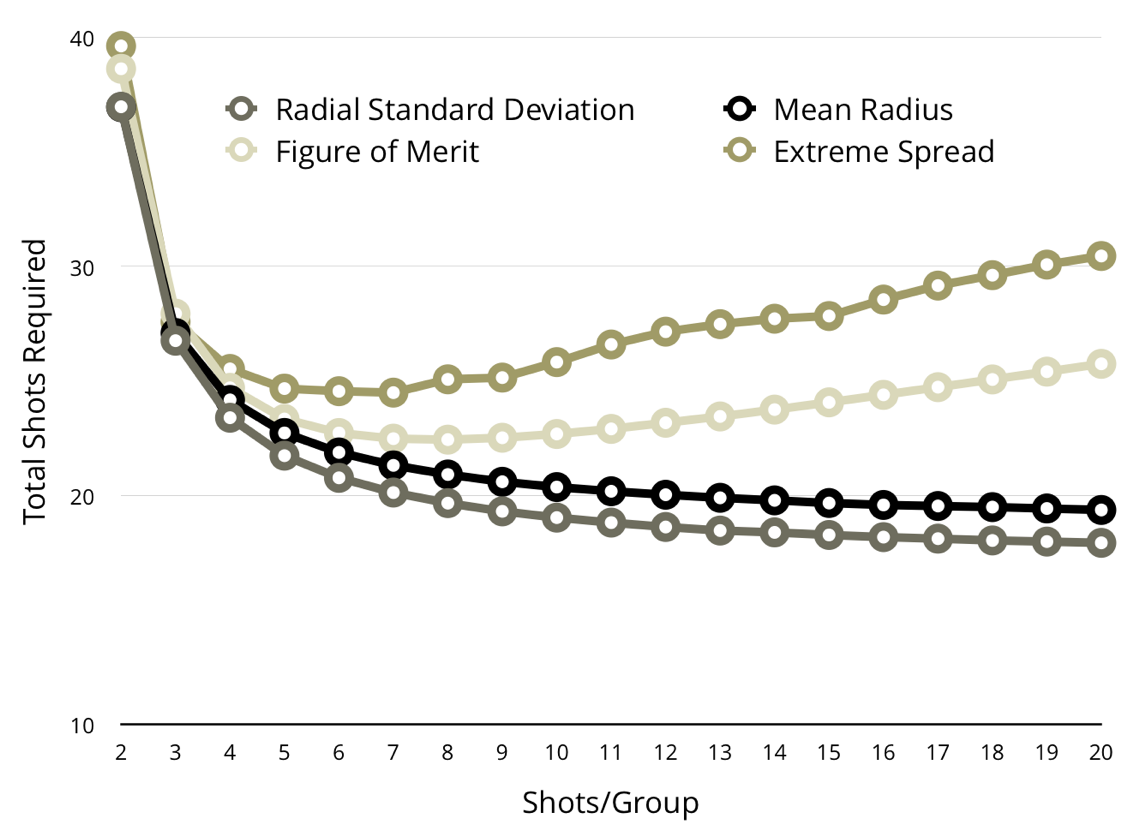

So how many shots must be fired in one of these tests? It depends on the group size and is plotted in the chart below.

You'll notice a couple of interesting things here. First, the methods that only measure extreme spreads (Extreme Spread and Figure Of Merit) have optimal group sizes of about 7, while the methods that require measuring every shot show increasing efficiency as the group size goes up. This is a reflection of the "ignored" data in large groups when only taking measurements of the outermost shots. Shooting 20-shot groups is even worse than shooting 3-shot groups in terms of efficiency.
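For the curious, the shape of the Extreme Spread curve can be roughly reproduced with a simulation. The Python sketch below is my own approximation, not the calculation from Kolbe's article or Grubbs' book. It assumes circular normal dispersion and a normal approximation in which the number of groups needed is (z x CV / tolerance) squared, where CV is the standard deviation of the group size divided by its mean and z is about 1.645 for 90% confidence.

```python
import math
import random
import statistics
from itertools import combinations

Z_90 = 1.645  # two-sided 90% confidence, normal approximation (assumption)

def extreme_spread(shots):
    """Largest center-to-center distance between any two shots."""
    return max(math.dist(a, b) for a, b in combinations(shots, 2))

def shots_required(group_size, tolerance=0.20, trials=2000, seed=1):
    """Estimated total shots needed to hit the tolerance at 90% confidence."""
    rng = random.Random(seed)
    spreads = [
        extreme_spread([(rng.gauss(0, 1), rng.gauss(0, 1)) for _ in range(group_size)])
        for _ in range(trials)
    ]
    # Coefficient of variation of a single group's extreme spread.
    cv = statistics.stdev(spreads) / statistics.fmean(spreads)
    groups_needed = (Z_90 * cv / tolerance) ** 2  # may be fractional (smoothed)
    return group_size * groups_needed

for n in (3, 5, 7, 10, 20):
    print(n, round(shots_required(n), 1))
```

The result is deliberately left fractional, so it corresponds to a smoothed curve rather than one restricted to whole groups. Simulation noise will wobble the exact numbers, so treat the output as a rough guide to the shape and not as a precise table.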

You will also see that the methods that require measuring every shot are superior to those that don't. They require fewer shots fired to get the same confidence. Similarly, the Figure of Merit method is better than the Extreme Spread method. It's not surprising that the amount of information gathered is a good indicator of the method's efficiency.

You can also see quantitatively how much work/ammunition you can save by using these methods. If anything, I'm surprised by how little you gain by changing from the worst method (Extreme Spread) to the best (Radial Standard Deviation). Those more complex methods are best left to ballistics labs who are burning up lots of ammo, and who can therefore appreciate the cost savings.

Practically speaking, the only two that make sense for the average shooter are Extreme Spread and Figure of Merit. And I personally don't see enough gain to justify the additional work involved in the Figure of Merit at this level of confidence.

Finally, the astute reader will notice that the required number of shots may or may not be a multiple of the group size. This is true. Practically speaking, we can't shoot 4.5 groups. Only 4 or 5. If we restrict ourselves to the nearest whole numbers of groups, the chart gets a little lumpy. This is because we are no longer dialed into 90% confidence and 20% error. This lumpiness obfuscates the information when shooting small numbers of groups, so we'll stick to the smoothed version.
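The whole-group restriction is easy to illustrate. This Python sketch takes a required shot count and a group size and returns a plan made of complete groups. I round up so the plan never falls short of the target, which is my own choice; rounding to the nearest whole group, as described above, would sometimes round down. The function name and the numbers are hypothetical.

```python
import math

def whole_group_plan(required_shots, group_size):
    """Return (number of groups, total shots) using only complete groups."""
    groups = math.ceil(required_shots / group_size)  # round up to a full group
    return groups, groups * group_size

# Example: a hypothetical requirement of 24 shots, fired as 7-shot groups.
print(whole_group_plan(24, 7))  # (4, 28)
```

The gap between the required shots and the shots actually fired is the source of the lumpiness in the whole-group version of the chart.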

In either case, the optimal group size is about 7 for the Extreme Spread and Figure of Merit methods, and bigger groups are always better for the "measure every shot" methods.

Practical Takeaways

A few three-shot groups are just not going to give you any confidence in your rifle's accuracy. To get what I would consider decent confidence, you really need to shoot at least 20-30 rounds per load. That will get you within 20% of the true group size with 90% confidence.

Handloaders should shoot 7-shot groups. You don't lose much information by shooting 5-shot groups, so that is a good option for those testing factory ammo (which typically comes in boxes of 20).

In no situation should you ever use 3-shot groups to get an indication of accuracy. It's just not an efficient way to spend your money or your time.

If you have more time than money, consider using the Figure of Merit (the average of the vertical and horizontal extreme spreads) to evaluate your groups since it's a little more efficient than the Extreme Spread.

Update

Update June 24 2018: A real statistician contacted me and informed me that the work done back in the day by Grubbs is not quite correct. Grubbs had to rely on the technology of the day to generate his samples, and the sample size was not adequate to perfectly represent the distribution. Modern repeats of the same method using vastly more samples find that the curves are shifted slightly and that the optimal group size is closer to 5 than 7. Check out the details at Ballistipedia.

Damon Cali is the creator of the Bison Ballistics website and a high power rifle shooter currently living in Nebraska.
